Nvidia’s commitment to advancing artificial intelligence technology continues with the introduction of the KAI Scheduler. This Kubernetes-native GPU scheduling solution was recently open-sourced under the Apache 2.0 license, showcasing Nvidia’s dedication to fostering a transparent and collaborative tech ecosystem. By making the scheduler available to the community, Nvidia is lowering barriers to entry and encouraging contributions that drive innovation in AI infrastructure. The move empowers developers and engineers and also strengthens the ecosystem around Nvidia’s technologies, emphasizing a collaborative approach to problem-solving.

A Tailored Solution for AI Workloads

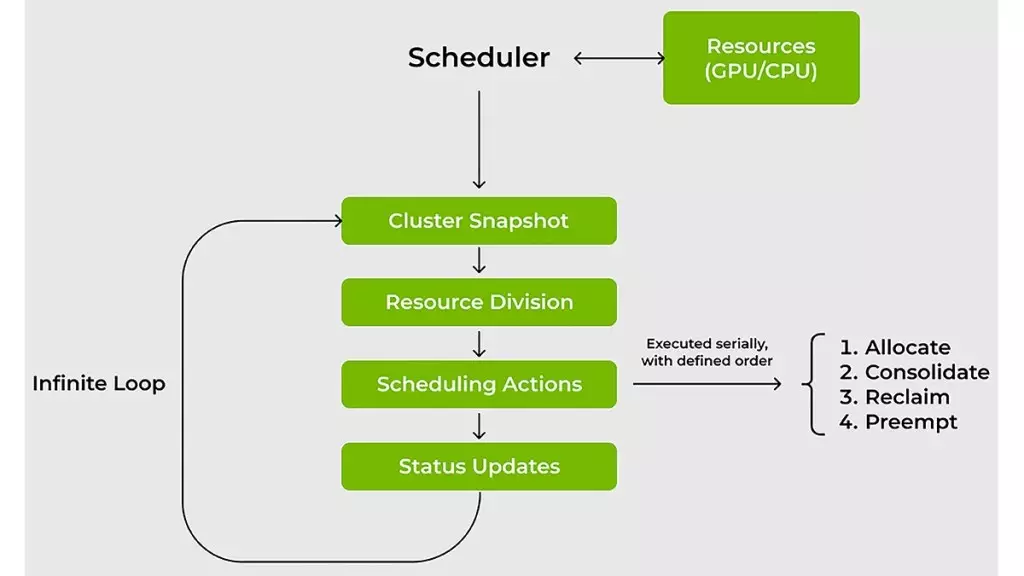

Managing AI workloads on GPUs and CPUs is hard. Traditional resource schedulers often struggle with the variability of AI workloads, where resource demands can change abruptly. The KAI Scheduler was designed specifically to address this problem, offering a dynamic alternative that adapts to fluctuating GPU demands. A data scientist might move from using a single GPU for exploratory data analysis to requiring multiple GPUs for large-scale distributed training, and a scheduler has to respond quickly when that happens.

By continuously recalculating fair-share values and adjusting quotas, the KAI Scheduler allocates resources efficiently without requiring constant administrator oversight. This level of automation allows IT and machine learning (ML) teams to focus on their primary objectives rather than getting bogged down in resource management.
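To make the idea of recalculating fair shares concrete, here is a minimal sketch in Python. It is not the KAI Scheduler's actual algorithm: the function name `fair_share`, the queue names, and the rule for handing out spare GPUs are all assumptions made for illustration. Each queue first receives the smaller of its quota and its demand, and any spare GPUs then go, one at a time, to the queue with unmet demand that is currently least over its quota.

```python
from typing import Dict, Tuple


def fair_share(capacity: int, queues: Dict[str, Tuple[int, int]]) -> Dict[str, int]:
    """Illustrative fair-share calculation (hypothetical, not KAI's code).

    `queues` maps a queue name to (quota, demand), both in whole GPUs.
    Assumes the quotas together do not exceed `capacity`.
    """
    # Step 1: every queue gets what it asks for, capped at its quota.
    share = {name: min(quota, demand) for name, (quota, demand) in queues.items()}
    spare = capacity - sum(share.values())

    # Step 2: lend spare GPUs to queues that still want more, always
    # picking the queue that is currently least over its quota.
    while spare > 0:
        hungry = [name for name, (_, demand) in queues.items() if share[name] < demand]
        if not hungry:
            break  # nobody wants the remaining GPUs
        pick = min(hungry, key=lambda name: (share[name] - queues[name][0], name))
        share[pick] += 1
        spare -= 1
    return share


# "prod" is mostly idle, so "research" borrows capacity beyond its quota.
idle = fair_share(8, {"research": (4, 6), "prod": (4, 1)})

# Once "prod" needs its full quota again, recalculating restores the split.
busy = fair_share(8, {"research": (4, 6), "prod": (4, 4)})
```

Rerunning the calculation whenever demand changes is what removes the need for an administrator to adjust allocations by hand in this toy model.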

Efficiency Redefined: A Scheduler for the Modern Era

The KAI Scheduler is fundamentally about saving time, the scarcest resource in technology development. By combining gang scheduling, GPU sharing, and a hierarchical queuing system, it reduces the wait time ML engineers often experience. Imagine being able to submit multiple jobs and then confidently step away, knowing that the scheduler will prioritize tasks and allocate resources efficiently. That trust in the scheduling process lets teams get results faster and iterate on their designs without stalling.
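Gang scheduling means a multi-pod job starts only if every one of its pods can be placed at once, so a distributed training run never holds GPUs while waiting for missing workers. The Python sketch below illustrates that all-or-nothing rule under simplifying assumptions: the function `gang_schedule`, the node names, and the greedy first-fit placement are hypothetical and are not taken from the KAI Scheduler's code.

```python
from typing import Dict, List, Optional


def gang_schedule(free_gpus: Dict[str, int], pods: List[int]) -> Optional[Dict[int, str]]:
    """Place every pod of a job, or none of them (illustrative only).

    `free_gpus` maps node name to free GPU count; `pods` lists the GPU
    request of each pod. Returns pod index -> node, or None if any pod
    cannot be placed.
    """
    remaining = dict(free_gpus)  # work on a copy; commit nothing until all fit
    placement: Dict[int, str] = {}

    # Greedy heuristic: place the largest pods first, first node that fits.
    for idx in sorted(range(len(pods)), key=lambda i: -pods[i]):
        node = next((n for n in sorted(remaining) if remaining[n] >= pods[idx]), None)
        if node is None:
            return None  # one pod does not fit, so the whole gang waits
        remaining[node] -= pods[idx]
        placement[idx] = node
    return placement


# Two 2-GPU workers fit on two nodes, so the job is admitted as a unit.
admitted = gang_schedule({"node-a": 2, "node-b": 2}, [2, 2])

# With only 3 free GPUs in total, neither worker is started.
rejected = gang_schedule({"node-a": 2, "node-b": 1}, [2, 2])
```

Because this is a greedy heuristic, it can miss a feasible placement that a more exhaustive search would find; the point here is only the all-or-nothing admission.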

Nvidia has employed two placement strategies for GPU and CPU workloads: bin-packing and spreading. Bin-packing combats resource fragmentation by placing smaller tasks on partially utilized GPUs and CPUs, while spreading distributes workloads evenly across nodes to maintain high availability. Combined with dynamic reallocation of unused resources, these strategies help prevent over-allocation and underutilization, a common pain point in shared cluster environments, so that no team hogs capacity while other resources sit idle.
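The difference between the two strategies comes down to which candidate node is preferred. The Python sketch below is a simplified illustration, not the scheduler's implementation: `pick_node`, the strategy labels `"binpack"` and `"spread"`, and the node names are assumptions chosen for this example.

```python
from typing import Dict, Optional


def pick_node(free_gpus: Dict[str, int], request: int, strategy: str) -> Optional[str]:
    """Choose a node for a job requesting `request` GPUs (illustrative only)."""
    # Only nodes with enough free GPUs are candidates.
    candidates = {node: free for node, free in free_gpus.items() if free >= request}
    if not candidates:
        return None
    if strategy == "binpack":
        # Prefer the fullest node that still fits, leaving large gaps intact.
        return min(candidates, key=lambda node: (candidates[node], node))
    if strategy == "spread":
        # Prefer the emptiest node, keeping load even across the cluster.
        return min(candidates, key=lambda node: (-candidates[node], node))
    raise ValueError(f"unknown strategy: {strategy}")


free = {"node-a": 1, "node-b": 3, "node-c": 8}
packed = pick_node(free, 1, "binpack")  # lands on the nearly full node-a
spread = pick_node(free, 1, "spread")   # lands on the mostly empty node-c
```

With bin-packing, the 1-GPU job fills the last slot on `node-a`, so `node-c` keeps all 8 GPUs free for a large training job. With spreading, the same job lands on `node-c`, which evens out the load across nodes but fragments the largest block of free GPUs.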

Removing Complexity in AI Development

The complexity of integrating various AI tools and frameworks has long been a barrier to rapid development. Traditional setups often require cumbersome manual configurations that delay project timelines. KAI Scheduler tackles this issue head-on with a built-in podgrouper that can automatically recognize and link workloads with popular tools such as Kubeflow, Ray, and Argo. Simplifying this process reduces the potential for error and accelerates development cycles, enabling teams to transition from prototyping to production more fluidly.

As researchers and ML engineers grapple with the need for efficient workflows and maximized output, such innovation could not come at a better time. The KAI Scheduler embodies a fundamental shift in how AI workloads are managed, emphasizing not only efficiency and speed but also collaboration and community-driven development.

A Bold Step for Nvidia and the AI Community

By open-sourcing the KAI Scheduler, Nvidia is not merely promoting its own tools; it is fostering a robust community where innovations can flourish. This initiative allows anyone to engage, contribute, and innovate, pushing the boundaries of what’s possible in AI infrastructure. The openness of this scheduling solution exemplifies a radical departure from traditional corporate strategies that tend to guard technological advancements closely.

As the industry continues to evolve, such initiatives will likely shape the future of AI development. With the KAI Scheduler leading the charge, teams now have access to powerful, flexible tools that can keep pace with the rapid-fire changes of AI workloads. This collaborative spirit inspires confidence that, together, we can create an environment conducive to groundbreaking advancements in technology.
