In recent days, Meta, the parent company of platforms like Facebook, Instagram, WhatsApp, and Messenger, has introduced a feature that allows users to engage with its AI tools directly within private chat conversations. This initiative, however, has sparked a multitude of concerns regarding user privacy and the handling of personal data. As Meta rolls out this new capability, it is essential to analyze the implications it carries for users and the overall landscape of digital privacy.

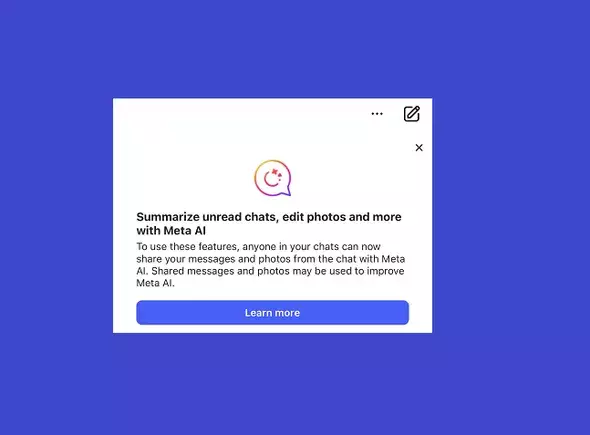

Meta’s latest addition is marketed as a tool for enhancing interaction: users can invoke Meta AI within an ongoing conversation by mentioning @MetaAI in the chat. While this may seem convenient, it raises questions about user privacy. Meta’s own warning about potential data sharing is a reminder that the information in these conversations may not stay private. According to the company, messages and photos shared in these chats can potentially be used to train its AI models, which could compromise the confidentiality users have come to expect in their direct messages. The paradox is glaring: in seeking to innovate by adding AI functionality, Meta may be undermining the secure environment that private messaging is meant to create.

In the world of social media, where users often prioritize convenience over caution, Meta’s introduction of AI features forces a critical reassessment of what digital privacy truly means. The pop-up notification prompting users to be mindful of sharing personal information is a telling indicator of the precarious line Meta is walking. While the company emphasizes that it systematically attempts to anonymize shared data by removing identifiable information, this assurance may not be enough to allay user concerns. The growing skepticism surrounding data protection raises valid questions: Are users fully aware of what they consented to when they signed up? How much does the intricate legal jargon of the terms and conditions actually tell users about the extent to which their data is collected and used?

Further complicating matters is the fact that opting out or avoiding the AI altogether appears to be the only foolproof way to safeguard personal information. This places the onus on users to scrutinize their own interactions, and risks compromising their experience for the sake of privacy. Moreover, the mere existence of an AI “watchdog” in chats changes the dynamics of communication, creating an unspoken fear that private exchanges may become fodder for AI algorithms.

The belief that users can protect their data by simply avoiding AI interactions in chats may be misleading. Deleting messages or opting not to invoke @MetaAI seems a straightforward solution, yet this does not address the overarching issue of data ownership. By agreeing to the service’s terms and conditions, users have unwittingly permitted Meta to utilize their data, which raises ethical concerns about informed consent. The reality is that user autonomy in these contexts is largely illusory, an uncomfortable realization in a world where data commodification is rampant.

Even though the risk of repercussions, such as the AI reconstructing personal information, might seem low, it is prudent for users to remain vigilant. The technology is still emergent, and with millions of users interacting on these platforms, the likelihood of misuse or misunderstanding only grows. Therefore, if users are concerned about data exposure, the most sensible advice is straightforward: put queries to Meta AI directly, outside of personal chats, thereby preserving the sanctity of private conversations.

As Meta continues to innovate and integrate advanced technologies into its messaging platforms, a broader discourse on digital privacy must take center stage. Companies in the tech space need to adopt transparent data practices and make user understanding a priority. For its part, Meta must navigate this new territory carefully, balancing the incorporation of AI innovations with its ethical obligation to safeguard user privacy. This is not merely about compliance with data regulations; it is ultimately about earning user trust and consent in an age where digital interactions become ever more complex.

Thus, as we navigate the exciting yet fraught landscape of AI integration in personal spaces, it is essential for users to remain informed and proactive, ensuring that the balance between convenience and privacy does not tip unfavorably.
